Everything Is Logarithms

Some connections between things, which I have not seen elsewhere. Maybe they mean something?

I have spent a lot of time trying to think intuitively about calculus, Taylor series, divergent series, and things like that. Here are a couple things I realized at some point which I would like to have written down. (This is sort of a sequel to a much more elementary post some years ago. I know a lot more now, and have also apparently gotten a lot more verbose.) Maybe they are well-known to some people, or maybe not, but they were at least interesting to me.

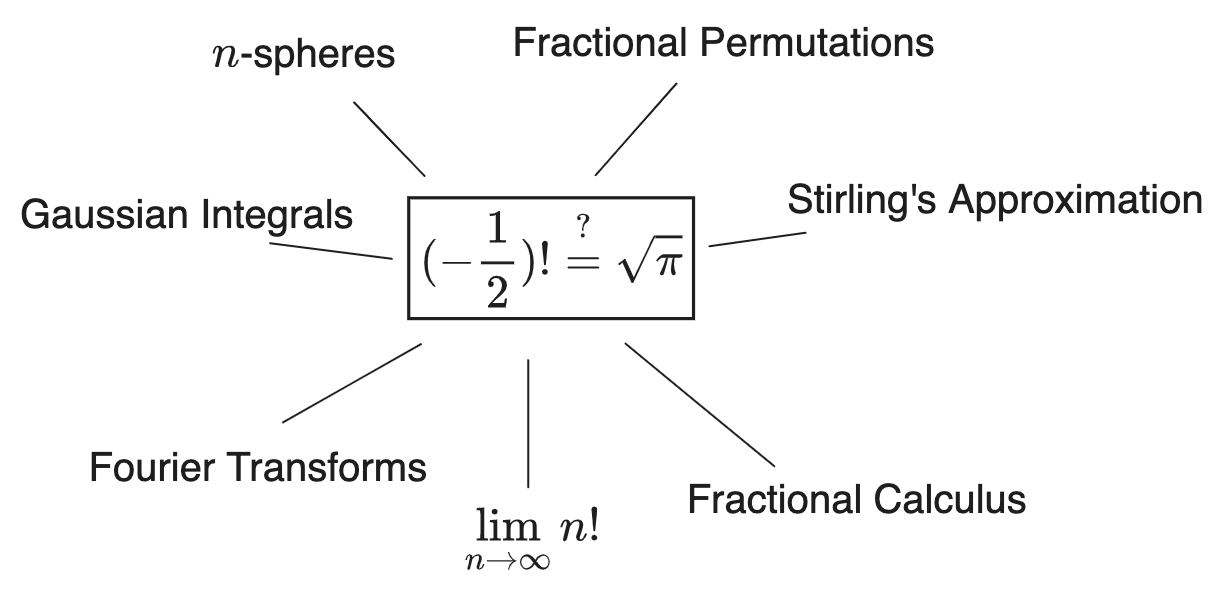

Still more investigation into why \((-\frac{1}{2})! = \sqrt{\pi}\). Now we are circling in on the real question, which is: what could it possibly mean to take a permutation of a fractional number of elements?

At this point I am not recounting regular mathematics at all but instead just meandering around my own thoughts on the subject. Read on if that’s interesting to you, but be warned, this is more-or-less a stream of consciousness. If I find anything good I’ll summarize it later.

Another installment in my investigations into the confusing value \(\Gamma(\frac{1}{2}) = (-\frac{1}{2})! = \sqrt{\pi}\).

This time, we survey a bunch of other places that \(\Gamma(\frac{1}{2})\) shows up, in order to fill out our board of clues.

There’s not a well-defined question here, really; it’s just trying to get to the bottom of something. The best way to describe it concisely would be:

Why, morally, does \((-\frac{1}{2})!\) equal \(\sqrt{\pi}\)?

(Always with the caveat that it does not, necessarily: \(\Gamma(x)\) is the best interpolation of \(x!\) to non-integers, but it’s not the only one, since any function which is zero on \(\bb{N}\) can be added to it, giving a “pseudogamma function”.)
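A quick numerical check of both claims, as a minimal sketch. The names `factorial_gamma` and `pseudo_factorial` are made up for illustration, and the sine term is just one arbitrary choice of a function that vanishes at every integer.

```python
import math

def factorial_gamma(x):
    """x! extended to non-integers via the Gamma function: x! = Gamma(x + 1)."""
    return math.gamma(x + 1)

def pseudo_factorial(x):
    """A hypothetical 'pseudogamma' variant: add a term that is zero at every integer."""
    return math.gamma(x + 1) + math.sin(math.pi * x)

# (-1/2)! agrees with sqrt(pi) to floating-point precision.
print(factorial_gamma(-0.5), math.sqrt(math.pi))

# The variant matches n! at the integers but disagrees at -1/2.
print([round(pseudo_factorial(n), 9) for n in range(5)])
print(pseudo_factorial(-0.5))
```

So the integers alone cannot pin down the value at \(-\frac{1}{2}\); something extra has to single out \(\Gamma\).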

We know why it holds algebraically, but otherwise it remains a mostly mysterious fact—connected to lots of things but not exactly “explained” by any of them. If we can find an answer, maybe it will shed light on all the places that \(\pi\) and \(\Gamma\) show up. Or maybe not! Who knows.

Why care about any of this? Well, just for fun, mostly. It’s mostly about recording the chains of thought for posterity. This kind of inquiry tends to seem completely self-explanatory to some people and absurd to others. Personally I just enjoy it; it feels more “significant” to search for the philosophical meaning of a thing than to look for a new identity or proof or something like that. And who knows, maybe we’ll discover something useful along the way.

In which we try to figure out what’s going on with double-factorials.

This was formerly part of the previous post about \(n\)-spheres, but I started adding things to it and decided to split them up. It is not necessary to read the original previous post first, but it is sort of a sequel, since it’s the direction my investigation has gone. Both articles are essentially unwieldy dumps for notes and calculations that I’ve done and wanted a record of, but maybe they’ll be useful as a survey if anyone else is curious about the same stuff and happens to come across this.

My main finding is that I now believe we should be thinking of factorials as multiplicative integrals, like this:

\[\frac{n!}{m!} = \prod_m^n d^{\times}(x!)\]

And in particular, the factorials we’re used to have an implicit lower bound on that integral: the value \(n!\) is really \(\frac{n!}{0!} = \prod_0^n d^{\times}(x!)\). This means we never really “see the value” of \(0!\), because \(0!\) is equivalent to \(0!/0! = 1\). This interpretation seems to remove a bunch of ambiguity in the various definitions/analytic continuations of factorials on non-integer numbers, as well as explaining why those definitions don’t mess up the usual combinatoric sense of factorials. It also completely tidies up the arguments for why \(0! = 1\).
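Here is one hedged way to play with that idea numerically. It assumes \(x! = \Gamma(x+1)\) and reads \(d^{\times}(x!)\) as the small ratio \((x+h)!/x!\) across a step of width \(h\); both choices, and the function name, are my assumptions for the sketch rather than definitions from the post.

```python
import math

def factorial_ratio_by_product(m, n, steps=1000):
    """Multiply the ratios (x+h)! / x! across [m, n]; the product telescopes to n!/m!."""
    h = (n - m) / steps
    product = 1.0
    for k in range(steps):
        x = m + k * h
        product *= math.gamma(x + h + 1) / math.gamma(x + 1)
    return product

# Integer bounds with unit steps: this is just 1 * 2 * 3 * 4 * 5.
print(factorial_ratio_by_product(0, 5, steps=5))

# Non-integer bounds work the same way: (2.5)! / (-0.5)! = 2.5 * 1.5 * 0.5.
print(factorial_ratio_by_product(-0.5, 2.5))
```

Only ratios ever appear, which is the point: the lower bound is always in there somewhere.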

The Wikipedia articles \(n\)-sphere and Volume of an \(n\)-ball and Volume of a unit sphere are not great references on the basic formulae because all the information is spread out among them in un-useful ways. I went to write my own reference… but then I got annoyed by the shape of the formulae themselves, with their strange Gamma functions and unintuitive \(\sqrt{\pi}\) factors, so I started trying to refactor them. It didn’t all fall into place, unfortunately, but there were some discoveries that I found interesting (which are probably out there in the literature, or not, I don’t know). I thought I’d post it anyway because I still feel like there’s something here that I’m grasping around for and I’d like to save the progress I made.

Special edition / rare vintage math facts that I found interesting. Not your everyday stuff. Somewhat kooky. Grain of salt, etc.

I came across this article, about the following counterintuitive partial derivative identity for a function \(u(v,w)\):

\[\begin{aligned} (\frac{\p u}{\p v} \|_w) (\frac{\p v}{\p w} \|_u) (\frac{\p w}{\p u} \|_v) = -1 \end{aligned}\]

(Each term is assumed to be non-zero.) More succinctly:

\[\frac{\p u}{\p v} \frac{\p v}{\p w} \frac{\p w}{\p u} = -1\]

Or, with \(u_v = \p u(v, w)/\p v\), as:

\[u_v v_w w_u = -1\]

It can be found on Wikipedia under the name Triple Product Rule.
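To see the minus sign show up in practice, here is a small numerical sketch. It assumes the simplest relation I could think of, \(u = vw\) (so \(v = u/w\) and \(w = u/v\)), and estimates each partial derivative by a central difference; the helper names are made up.

```python
def partial(f, x, h=1e-6):
    """Central-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2 * h)

def triple_product(v, w):
    """u_v * v_w * w_u for the assumed relation u = v * w."""
    u = v * w
    u_v = partial(lambda vv: vv * w, v)  # vary v, hold w fixed
    v_w = partial(lambda ww: u / ww, w)  # vary w, hold u fixed
    w_u = partial(lambda uu: uu / v, u)  # vary u, hold v fixed
    return u_v * v_w * w_u

print(triple_product(2.0, 3.0))  # close to -1, up to finite-difference error
```

By hand: \(u_v = w\), \(v_w = -u/w^2\), \(w_u = 1/v\), and the product is \(-u/(vw) = -1\).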

The reason to care about this (besides that it’s sometimes useful in calculations) is that it seems a bit perplexing that the minus sign is there. Shouldn’t these fractions sorta cancel? Yes, we all learned that derivatives aren’t really fractions… but they definitely act like fractions a bit, certainly more often than they don’t act like fractions, and it’s odd that in this case they seem to act like the opposite of fractions. We’d like to repair our intuition somehow.

Although Baez’s article does justify this identity in a few different ways, there’s still something puzzling there; the answers are not quite satisfactory. I don’t like when there’s some fact of basic calculus which does not immediately correspond to intuition (without clearly being an artifact of the formalism). So I thought I would try to get to the bottom of things.

While there are four standard ways of multiplying vectors and each has its own notation (\(\cdot\), \(\times\), \(\^\), \(\o\)), there is no generally-agreed-upon definition or notation for dividing vectors. That’s mostly because it’s not a thing. But there are times when I wish it was a thing. Or rather, there are too many times where a notion of division would be useful to ignore. There’s an operation which acts a lot like division, and which comes up more often than you might notice if you weren’t looking for it. And it does, in a sense, generalize scalar division. So why not?

This article describes what I would kinda like the notation \(\b{b} / \b{a}\) to mean. I’m writing it out primarily because I keep wanting to refer to it in other articles; this way I have something to link to instead of defining it inline each time. I don’t mean to claim that this “is” vector division. Rather it’s a thing that is sufficiently common that it makes sense to generalize the notation of division for.

It has come to my attention (actually I think I noticed this a long time ago and then forgot…) that there is a correct answer as to the convention used for spherical coordinates.

The options are:

the physics way

the math way

It turns out that there is a right answer. The mathematicians are right (for once).

The reason is simple. Just look at them:

\(\LARGE{\theta \, \, \, \phi}\)

They’re fricking pictures of which angle they are.

That’s right. I do not care that physics has always been doing it the other way, or even that all the reference books about spherical harmonics disagree (what a weird objection). There is only one right answer and it will be easy for everyone to remember. Please update your textbooks. We can’t fix everything (the electron will always be negatively charged, shoot) but perhaps we can fix this.

In which we attempt to better understand the classic multivariable calculus optimization problem.

Here’s a dubious idea I had while playing with using delta functions to perform surface integrals. Also includes a bunch of cool tricks with delta functions, plus some exterior algebra tricks that I’m about 70% sure about. Please do not expect anything approaching rigor.

Every once in a while the internet gets talking about Geometric Algebra (henceforth GA) and how it’s a new theory of math that fixes everything that’s wrong with linear algebra and multivariable calculus. When I come across this stance I am compelled to respond with something like: “wait wait, it’s not true! GA is clearly onto something but there’s also a lot wrong with it. What you probably want is just the concepts of multivectors and the wedge product!” Which is not very effective, because it takes a long time to convince anyone why, and it’s also not very productive, because this just keeps happening over and over without anything changing.

Many people agree with me on this, but they deal with it by mostly ignoring GA instead of complaining about it. But I actually like what GA is trying to do and I want it to succeed. So today I’m going to actually make those points in a longer article that I can link to instead.

Specifically what I have a problem with is that the subject is pretty clearly flawed and needs serious work, and especially that the culture around it does not seem to realize this or be interested in addressing those flaws. In particular: Hestenes’ Geometric Product is not a very good operation and we should not be rewriting all of geometry in terms of it. For some reason GA is obsessed with the geometric product, and it’s causing all sorts of problems. They act like this is clearly the way that geometry should be done and everyone else just can’t see it yet, and they have this weird religious zeal about it that is problematic and offputting. It’s also just ineffective: treating certain models as if they are somehow canonical and obvious is wrong, mathematically and socially, and it puts people off right from the start. There probably is a place for the geometric product in a grand theory of geometry, but it’s not front-and-center like GA has it today, and as a result the theory is a lot less compelling than it could be.

There’s an identity in electromagnetism which has been bugging me since college.

Gauss’s law says that the divergence of the electric field is equivalent to the charge distribution: \(\del \cdot \b{E} = \rho\). But in order to use this for a point charge—which is the most basic example in the subject!—we already don’t have the mathematical objects we need to calculate the divergence on the left or to represent the charge distribution on the right.

After all, the field of a point charge has to be \(\b{E} = q \hat{\b{r}}/4 \pi r^2\), and since its charge should be concentrated at a point it has to be a delta function: \(\del \cdot (q \hat{\b{r}}/4 \pi r^2) = q \delta(\b{x})\). In your multivariable-calculus-based E&M class you might mention this briefly, at best. Yet it is… kinda weird? And important? It feels like it should be a basic fact that lives inside of a larger intuitive framework of divergences and delta functions and everything else.

Here’s some stuff about delta functions I keep needing to remember, including:

For a generic linear equation like \(ax = b\) the solutions, if there are any, seem to always be of the form

\[x = a_{\parallel}^{-1} (b) + a_{\perp}^{-1} (0)\]

regardless of whether \(a\) is “invertible”. Here \(a_{\parallel}^{-1}\) is a sort of “parallel inverse”, in some cases called the “pseudoinverse”, which inverts the invertible part of \(a\). \(a_{\perp}^{-1}\) is the “orthogonal inverse”, called either the nullspace or kernel of \(a\) depending what field you’re in, but either way it’s the objects for which \(ax = 0\). Clearly \(ax = a (a_{\parallel}^{-1} (b) + a_{\perp}^{-1} (0)) = a a_{\parallel}^{-1} (b)\), and that’s the solution if one exists.
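The smallest concrete instance I can think of is a single equation \(\b{a} \cdot \b{x} = b\) in three unknowns, sketched below. Everything here is an assumption for illustration: the function names, the particular numbers, and the hand-picked kernel vectors. The “parallel inverse” is taken to be the minimum-norm solution \(b \, \b{a} / |\b{a}|^2\).

```python
def dot(p, q):
    return sum(pi * qi for pi, qi in zip(p, q))

def parallel_inverse(a, b):
    """Minimum-norm solution of a . x = b for a nonzero row vector a."""
    scale = b / dot(a, a)
    return [scale * ai for ai in a]

def general_solution(a, b, kernel_basis, coefficients):
    """Particular solution plus any combination of kernel vectors."""
    x = parallel_inverse(a, b)
    for t, k in zip(coefficients, kernel_basis):
        x = [xi + t * ki for xi, ki in zip(x, k)]
    return x

a = [1.0, 2.0, 2.0]
kernel_basis = [[2.0, -1.0, 0.0], [2.0, 0.0, -1.0]]  # both satisfy a . k = 0

x = general_solution(a, 9.0, kernel_basis, [0.5, -3.0])
print(x, dot(a, x))  # a . x is still 9, whatever coefficients are chosen
```

The kernel part is free to be anything, which is exactly the multi-valuedness of the inverse.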

This pattern shows up over and over in different fields, but I’ve never seen it really discussed as a general phenomenon. But really, it makes sense: why shouldn’t any operator be invertible, as long as you are willing to have the inverse (a) live in some larger space of objects and (b) possibly become multi-valued?

Here are some examples. Afterwards I’ll describe how this phenomenon gestures towards a general way of dividing by zero.

A classic use of data science at a technology company is a feature A/B test to improve revenue or engagement metrics.

Look, a list of simple things that you and your colleagues should know to avoid, but which you will still do by accident from time-to-time and eventually spend months of your life, in total, tracking down.

Don’t worry, these are all a bit more interesting than React 101 stuff like “don’t write conditional hooks”.

Many more words about React.js. Previously: The Zen of React.

As you may know: in 2018ish, the React team added “hooks” to the library. From the beginning hooks have been presented as a new, better thing which would gradually take the place of class components. This was very strange and controversial at the time, and it still is, judging by the comment sections complaining about it every other week.

Common frustrations about hooks: they’re confusing, they’re clunky, they’re unnecessary, they’re difficult to use correctly. All of these are true. But I think hooks are great and that they’re the future of programming. This article, hopefully one of a series, attempts to get you to agree with that statement. It’s about what hooks are and why they are how they are.

In particular I think it really helps to see a hook written out as a simple programming exercise, in order to understand exactly how they solve the problem that they are trying to solve. When you do this, and understand what that problem is, you can see that, while hooks aren’t even a great solution to that problem, they are at least better than classes, and therefore they are a step in the right direction.

I am hoping to write a series of posts about React.js: why it’s great, why hooks are great but also confusing, and then maybe what all is wrong with it and what can be done. These are largely perspectives I came to hold while working as a frontend developer at Dropbox for the last few years (I quit earlier this year, though, for… reasons.)

For posterity: here’s a little Python script I wrote that takes an image file and pads it with white space (or whatever color) to make it have a certain aspect ratio.

It’s very very bad right now.
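For reference, the arithmetic at the heart of any such script is small. This is not the script from the post, just a minimal sketch of computing the padded canvas size and where to paste the original image; `padded_size` and its return layout are hypothetical.

```python
def padded_size(width, height, target_ratio):
    """Return (new_width, new_height, left_offset, top_offset) for centered padding.

    target_ratio is the desired width / height of the padded canvas.
    """
    if width / height < target_ratio:
        # Too narrow: widen the canvas, keep the height.
        new_width, new_height = round(height * target_ratio), height
    else:
        # Too wide (or already right): grow the height, keep the width.
        new_width, new_height = width, round(width / target_ratio)
    left = (new_width - width) // 2
    top = (new_height - height) // 2
    return new_width, new_height, left, top

print(padded_size(400, 300, 16 / 9))
print(padded_size(800, 200, 1.0))
```

An imaging library would then create a blank canvas of that size in the chosen color and paste the original at the offsets.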

More exterior algebra notes. This is a reference for (almost) all of the many operations that I am aware of in the subject. I will make a point of giving explicit algorithms and an explicit example of each, in the lowest dimension that can still be usefully illustrative.

Rapid-fire non-rigorous intuitions for calculus on complex numbers. Not an introduction, but if you find/found the subject hopelessly confusing, this should help.

QM is harder to understand than it needs to be because people are reluctant to write down explicit examples of all of the objects that you talk about. They’ll write a whole textbook about operators and observables and their eigenvalues when they act on the wave function, but they won’t just write down an example of a wave function that has those properties.

This is my attempt at fixing that. I’m trying to learn QFT and it helps to have the prerequisites compressed into the simplest possible representation. It also helps me to write everything down in a compressed form so I can reference it more easily.

This will make no sense if you don’t already have a good understanding of quantum mechanics. Also it might be kinda wrong-ish, but I bet it will help.

Conventions: \(c = 1\), \(g_{\mu \nu} = \text{diag}(+, -, -, -)\). I like to write \(S_{\b{x}}\) for \(\nabla S\).

The so-called Born Rule of quantum mechanics says that if a system is in a state \(\alpha \| 0 \> + \beta \| 1 \>\), upon ‘measurement’ (in which we entangle with one or the other outcome), we measure the eigenvalue associated with the state \(\| 0 \>\) with probability

\[P[0] = \| \alpha \|^2\]

The Born Rule is normally included as an additional postulate in QM, which is somewhat unsatisfying. Or at least, it is apparently difficult to justify, given that I’ve read a bunch of attempts, each of which talks about how there haven’t been any other satisfactory attempts.
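Just to pin down what the rule itself computes (not the argument for it), here is a tiny sketch. The function name is made up, and dividing by the total is my addition so that unnormalized amplitudes also work.

```python
def born_probabilities(amplitudes):
    """Squared magnitudes of the amplitudes, normalized to sum to 1."""
    norm = sum(abs(a) ** 2 for a in amplitudes)
    return [abs(a) ** 2 / norm for a in amplitudes]

# alpha = 0.6, beta = 0.8i: the phase of beta does not affect the probabilities.
print(born_probabilities([0.6, 0.8j]))
```

The thing that wants explaining is why it is the squared magnitude, and not some other function of the amplitude, that shows up here.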

Anyway here’s an argument I came up with which seems somewhat compelling. It argues that the Born Rule can emerge from interference if you assume that every measurement of a probability that you’re exposed to (which I guess is a Many-Worlds-ish idea) is assigned a random, uncorrelated phase.

Most of our descriptions of how our brains work are fundamentally vague. We speak of our brains performing verbs like “think”, “realize”, “forget”, or “hope” but we aren’t talking about what’s going on mechanically to result in those qualities.

Sure, these can all be assigned truth values, in the sense that if everyone generally agrees that someone ‘realized’ something, we might define their brain to have performed the objective act of ‘realization’. But this gives no technical understanding of what the process of realization is – beyond, perhaps, some hand-wavey story about connections being bridged between neurons.

So, sometime in the last few years the English-speaking Internet became aware of the condition called aphantasia. Aphantasia is when a person is unable to picture images in their thoughts – they don’t have a “mind’s eye” at all.

This is interesting because, in contrast to the above, aphantasia is a concrete description of how the brain works. Some people see an image in their head when they draw or recall something; others don’t. Their brains work in materially different ways. I would have no idea how to figure out if two people “realize” something via different mechanisms, but I can be sure that two people’s brains operate differently, if one sees pictures and the other doesn’t.

More on simplexes in oriented projective geometry (OPG), since they are connected to everything else. See the previous post for details on OPG.

In the previous post in this series, I looked at how some operations related to exterior algebra (wedge / ‘join’, ‘meet’, interior product, Hodge Star, geometric product) roughly correspond to set operations (union, intersection, subtraction, complement, symmetric difference), but the analogy wasn’t very good. Exterior algebra isn’t exactly linearized set algebra. So what is it?

Well, I found another subject that’s a much closer fit: oriented projective geometry (henceforth ‘OPG’).

Vector spaces are assumed to be finite-dimensional and over \(\bb{R}\). The grade of a multivector \(\alpha\) will be written \(\| \alpha \|\), while its magnitude will be written \(\Vert \alpha \Vert\). Bold letters like \(\b{u}\) will refer to (grade-1) vectors, while Greek letters like \(\alpha\) refer to arbitrary multivectors with grade \(\| \alpha \|\).

You may have noticed that the behavior of the wedge product on pure multivectors is to append them as lists: \(\b{wx} \^ \b{yz} = \b{wxyz}\), where some signs come in if you’d rather have the terms in a different order. This could also be interpreted as taking their union as sets. Either way, \(\^\) seems to act like a union or concatenation operation combined with a bonus antisymmetrization step which has the effect of making duplicate terms like \(\b{x \^ x}\) vanish.
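The “concatenate, then antisymmetrize” behavior is easy to sketch for basis blades. Assumptions for this sketch: a blade is a tuple of basis-vector labels, the canonical order is alphabetical, and the result is a `(sign, blade)` pair with sign 0 meaning the product vanished. The name `wedge` and this representation are mine, not a standard API.

```python
def wedge(a, b):
    """Wedge two basis blades given as tuples of labels; returns (sign, sorted_blade)."""
    items = list(a) + list(b)  # the concatenation / union step
    if len(set(items)) < len(items):
        return 0, ()  # a repeated factor like x ^ x kills the product
    sign = 1
    # Bubble sort into canonical order, flipping the sign once per swap.
    for i in range(len(items)):
        for j in range(len(items) - 1 - i):
            if items[j] > items[j + 1]:
                items[j], items[j + 1] = items[j + 1], items[j]
                sign = -sign
    return sign, tuple(items)

print(wedge(("w", "x"), ("y", "z")))  # already in order, no sign
print(wedge(("y",), ("x",)))          # one swap, so a minus sign
print(wedge(("x",), ("x",)))          # duplicate term vanishes
```

Extending this to general multivectors is then just bilinearity over sums of blades.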

More notes on exterior algebra. This time, the interior product \(\alpha \cdot \beta\), with a lot more concrete intuition than you’ll see anywhere else, but still not enough.

I am not the only person who has had trouble figuring out what the interior product is for. This is what I have so far…

Here is a survey of understandings on each of the main types of Taylor series:

I thought it would be useful to have everything I know about these written down in one place.

Particularly, I don’t want to have to remember the difference between all the different flavors of Taylor series, so I find it helpful to just cast them all into the same form, which is possible because they’re all the same thing (seriously why aren’t they taught this way?).

These notes are for crystallizing everything when you already have a partial understanding of what’s going on. I’m going to ignore discussions of convergence so that more ground can be covered and because I don’t really care about it for the purposes of intuition.

You may have seen that Youtube video by Numberphile that circulated the social media world a few years ago. It showed an ‘astounding’ mathematical result:

\[1+2+3+4+5+\ldots = -\frac{1}{12}\]

(quote: “the answer to this sum is, remarkably, minus a twelfth”)

Then they tell you that this result is used in many areas of physics, and show you a page of a string theory textbook (oooo) that states it as a theorem.

The video caused a bit of an uproar at the time, since it was many people’s first introduction to the (rather outrageous) idea and they had all sorts of (very reasonable) objections.

I’m interested in talking about this because: I think it’s important to think about how to deal with experts telling you something that seems insane, and this is a nice microcosm for that problem.

Because, well, the world of mathematics seems to have been irresponsible here. It’s fine to get excited about strange mathematical results. But it’s not fine to present something that requires a lot of asterisks and disclaimers as simply “true”. The equation is true only in the sense that if you subtly change the meanings of lots of symbols, it can be shown to become true. But that’s not the same thing as quotidian, useful, everyday truth. And now that this is ‘out’, as it were, we have to figure out how to cope with it. Is it true? False? Something else? Let’s discuss.
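One concrete example of “changing the meaning of the symbols”, as a hedged sketch: damp each term by \(e^{-\epsilon n}\) so the sum converges, then throw away the part that blows up as \(\epsilon \to 0\). The damped sum has the expansion \(\sum n e^{-\epsilon n} = \frac{1}{\epsilon^2} - \frac{1}{12} + O(\epsilon^2)\). This is only one of several regularizations people use, and the function names are made up.

```python
import math

def damped_sum(eps):
    """Sum of n * exp(-eps * n) for n = 1, 2, 3, ..., cut off once terms are negligible."""
    terms = int(60 / eps)
    return math.fsum(n * math.exp(-eps * n) for n in range(1, terms + 1))

def finite_part(eps):
    """What is left after subtracting the divergent 1 / eps^2 piece."""
    return damped_sum(eps) - 1 / eps**2

for eps in (0.1, 0.03, 0.01):
    print(eps, finite_part(eps))
print(-1 / 12)
```

The leftover settles near \(-\frac{1}{12}\), but only after discarding an infinite piece, which is exactly the kind of asterisk that tends to get dropped.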

(Not intended for any particular audience. Mostly I just wanted to write down these derivations in a presentable way because I haven’t seen them from this direction before.)

(Vector spaces are assumed to be finite-dimensional and over \(\bb{R}\))

Exterior algebra is obviously useful any time you’re anywhere near a cross product or determinant. I want to show how it also comes with an inner product which can make certain formulas in the world of vectors and matrices vastly easier to prove.

(This is not really an intro to the subject. I don’t have an audience in mind for this. I’ve written my notes out in an expository style because it helps me retain what I study.)

(Vector spaces are assumed to be finite-dimensional and over \(\bb{R}\) with the standard inner product unless otherwise noted.)

Exterior algebra (also known as ‘multilinear algebra’, which is arguably the better name) is an obscure and technical subject. It’s used in certain fields of mathematics, primarily abstract algebra and differential geometry, and it comes up a lot in physics, often in disguise. I think it ought to be far more widely studied, because it turns out to take a lot of the mysteriousness out of the otherwise technical and tedious subject of linear algebra. But most of the places it turns up it is very obfuscated. So my aim is to study exterior algebra and do some ‘refactoring’: to make it more explicit, so it seems like a subject worth studying in its own right.

In general I’m drawn to whatever makes computation and intuition simple, and this is it. In college I learned about determinants and matrix inverses and never really understood how they work; they were impressive constructions that I memorized and then mostly forgot. Exterior Algebra turns out to make them into simple intuitive procedures that you could rederive whenever you wanted.

Here’s a summary of the concept of oriented area and the “shoelace formula”, and some equations I found while playing around with it which are not novel. I wanted to write this article because I think the concept deserves to be better popularized, and it is useful to me to have my own reference on the subject.
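For concreteness, the shoelace formula for a polygon with vertices \((x_i, y_i)\) is \(A = \frac{1}{2} \sum_i (x_i y_{i+1} - x_{i+1} y_i)\), with indices wrapping around. A minimal sketch follows; the function name is mine, and the sign convention assumed is counterclockwise positive.

```python
def oriented_area(points):
    """Signed area of a polygon given as a list of (x, y) vertices in order."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x0, y0 = points[i]
        x1, y1 = points[(i + 1) % n]  # wrap around to close the polygon
        total += x0 * y1 - x1 * y0
    return total / 2

square = [(0, 0), (1, 0), (1, 1), (0, 1)]
print(oriented_area(square))        # counterclockwise: positive
print(oriented_area(square[::-1]))  # clockwise: same magnitude, opposite sign
```

The sign flip under reversal is the “oriented” part.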

Several resources I have found on the subject, including Wikipedia, all cite a 1959 text entitled Computation of Areas of Oriented Figures by A.M. Lopshits, which was originally printed in Russian and translated to English by Massalski and Mills, but is apparently not available online. I did find a copy via a university library, so I thought I would summarize its contents in the process in order to make them available to a casual Internet reader. TLDR: it’s not worth finding a copy of that book. It’s fairly elementary and doesn’t contain ideas that can’t be found elsewhere. But it does have a few cool problems in it.

I also took this chance to practice making nice math diagrams. Which went okay, but gosh is it ever not worth the effort.

A friend is writing her master’s thesis in a subfield where data is typically summarized using geometric statistics: geometric means (GMs) and geometric standard deviations (GSDs), and sometimes even geometric standard errors (GSEs), whatever those are. Oh and occasionally also ‘geometric confidence intervals’ and ‘geometric interquartile ranges’.

…Most of which are (a) not something anyone really has intuition for and (b) surprisingly hard to find references for online, compared to regular ‘arithmetic’ statistics.

I was trying to help her understand these, but it took a lot of work to find easily-readable references online, so I wanted to write down what I figured out.

(later edit: I wish I had saved the sources though.)
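The short version of the first two, as a hedged sketch: take logs, compute the ordinary statistic, and exponentiate back. The choice of the sample standard deviation (dividing by \(n - 1\)) is my assumption; some fields use the population version instead.

```python
import math
import statistics

def geometric_mean(values):
    """exp of the arithmetic mean of the logs (values must be positive)."""
    return math.exp(statistics.mean([math.log(v) for v in values]))

def geometric_sd(values):
    """exp of the sample standard deviation of the logs; a multiplicative factor."""
    return math.exp(statistics.stdev([math.log(v) for v in values]))

data = [1.0, 10.0, 100.0]
print(geometric_mean(data), geometric_sd(data))
```

For this data the GM is 10 and the GSD is also a factor of 10, so “one GSD around the GM” reads as the range from 10/10 to 10 × 10, multiplying and dividing rather than adding and subtracting.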

A rant.

My bike was stolen out of the backyard last night, so I’m feeling a little more aggravated by everything than usual.

This has had the effect of reminding me of a recurring sensation in my life as a software developer: that dealing with technology can be a fundamentally miserable experience, and that the skill of being ‘good’ at software is often mostly the same skill as being able to take a lot of crap from faceless, abusive machines in ways that you feel powerless to do anything about.

So while I’m all for the “let’s teach everybody to code!” movement, I do sometimes wish we’d stop writing yet another Learn Machine Learning With Python Tutorial, or whatever, and just maybe take some time to work on making everything in the world around us better in little incremental ways, by making what we’ve already got suck less, for ourselves and for all the newcomers and for just everyone, so we can have less stress and more peace in our lives.

Basically some days I can’t honestly tell anyone they should get into this, when on a good day you get to slowly hack your way through bullshit and on a bad day you might just succumb and give up.

Some thoughts on Taylor series.

We can often write a differentiable function \(f(x)\) as a Taylor series around a point \(x\), approximating it in terms of its derivatives at that point:

\[f(x+a) = \sum_{n=0}^{\infty} \frac{a^{n} f^{(n)}(x) }{n!}\]

And, under certain conditions, this series will converge exactly to the values of the function at nearby points.
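A small sketch of the formula in action, using \(f = \exp\) because every derivative \(f^{(n)}(x)\) is just \(e^x\). The function name and the choice of 20 terms are arbitrary.

```python
import math

def taylor_shift(derivatives_at_x, a):
    """Evaluate sum_n a^n f^(n)(x) / n! from a finite list of derivatives f^(n)(x)."""
    return sum(a**n * d / math.factorial(n) for n, d in enumerate(derivatives_at_x))

x, a = 0.5, 0.3
derivatives = [math.exp(x)] * 20  # f, f', f'', ... all equal e^x for f = exp
print(taylor_shift(derivatives, a), math.exp(x + a))
```

The two printed numbers should agree to many digits. For a function with a finite radius of convergence the same code works only when \(a\) stays inside it.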

(Only interesting if you already know some things about information theory, probably)

(Disclaimer: Notes. Don’t trust me, I’m not, like, a mathematician.)

I have been reviewing concepts from Information Theory this week, and I’ve realized that I never quite really understood what Shannon Entropy was all about.

Specifically: entropy is not a property of probability distributions per se, but a property of translating between different representations of a stream of information. When we talk about “the entropy of a probability distribution”, we’re implicitly talking about the entropy of a stream of information that is produced by sampling from that distribution. Some of the equations make a lot more sense when you keep this in mind.
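For reference, the quantity in question is \(H = -\sum_i p_i \log_2 p_i\), which in the stream picture is the average number of bits per symbol that an ideal code would need. A minimal sketch, with a made-up function name:

```python
import math

def entropy_bits(probabilities):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

print(entropy_bits([0.5, 0.5]))         # fair coin
print(entropy_bits([0.5, 0.25, 0.25]))  # three symbols, uneven
```

The second distribution comes out to 1.5 bits, which matches the prefix code 0, 10, 11: one bit half the time, two bits the other half.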